ML in medicine: opportunities and threats

Artificial intelligence in medicine is a constantly evolving field that is on an upward trend. Precise analysis of human anatomical structures and automatic localization of inflammation or cancer are just two of the many examples of symbiosis between humans and machines. Doctors primarily use artificial intelligence in medicine for imaging diagnostics such as MRI (magnetic resonance imaging) and CT (computed tomography).

The development of predictive analytics in healthcare, built on machine learning tools and techniques embedded in medical algorithms, has made it possible to detect health problems early. These tools significantly ease the work of many scientists as well as doctors, supporting their decision-making in daily clinical practice.

Predictive analytics in healthcare using machine learning tools and techniques: image recognition

Image recognition technologies rely on algorithms that first learn what a healthy image of an organ looks like and then detect potential lesions or cancer. Given enough training data, these algorithms can effectively recognize anomalies and mark the areas of an organ that require further diagnostics. This process is called segmentation, or region of interest (ROI) identification.
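To make the idea concrete, here is a deliberately simplified sketch, assuming a stack of already aligned (registered) healthy scans stored as 2D NumPy arrays. It swaps in a per-pixel statistical model for the deep learning models used in practice: it learns the mean and spread of healthy intensities, then marks pixels in a new scan that deviate by more than a chosen number of standard deviations. The function names, the `z_threshold` value of 3.0, and the synthetic data are all illustrative assumptions.

```python
from __future__ import annotations

import numpy as np


def fit_healthy_model(healthy_images: np.ndarray) -> tuple[np.ndarray, np.ndarray]:
    """Learn the per-pixel mean and standard deviation of healthy scans.

    healthy_images: array of shape (n_images, height, width), assumed to be
    registered to a common anatomy so that pixel (i, j) is the same place.
    """
    return healthy_images.mean(axis=0), healthy_images.std(axis=0)


def anomaly_mask(image: np.ndarray, mean: np.ndarray, std: np.ndarray,
                 z_threshold: float = 3.0, eps: float = 1e-6) -> np.ndarray:
    """Return a boolean mask of pixels that look unlike the healthy model."""
    z = np.abs(image - mean) / (std + eps)  # per-pixel z-score
    return z > z_threshold


# Synthetic example: 50 noisy "healthy" scans and one scan with a bright lesion.
rng = np.random.default_rng(0)
healthy = rng.normal(loc=100.0, scale=5.0, size=(50, 64, 64))
scan = rng.normal(loc=100.0, scale=5.0, size=(64, 64))
scan[20:30, 20:30] += 60.0  # hypothetical lesion

mean, std = fit_healthy_model(healthy)
mask = anomaly_mask(scan, mean, std)
print("lesion pixels flagged:", mask[20:30, 20:30].mean())
print("other pixels flagged:", (mask.sum() - mask[20:30, 20:30].sum()) / (mask.size - 100))
```

The flagged pixels form a rough region of interest. Real systems learn far richer representations of healthy anatomy and typically refine such masks with a dedicated segmentation model.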

Artificial intelligence (AI) is revolutionizing medical imaging, extending it from image detection to initial or targeted diagnosis. AI achieves this through various machine learning and deep learning algorithms, which support radiologists by automating parts of their workflow. If this topic interests you, we recommend reading our case study on custom automated and semi-automated algorithms for liver and liver tumor segmentation and analysis.

AI in the daily work of doctors: a survey of U.S. adults

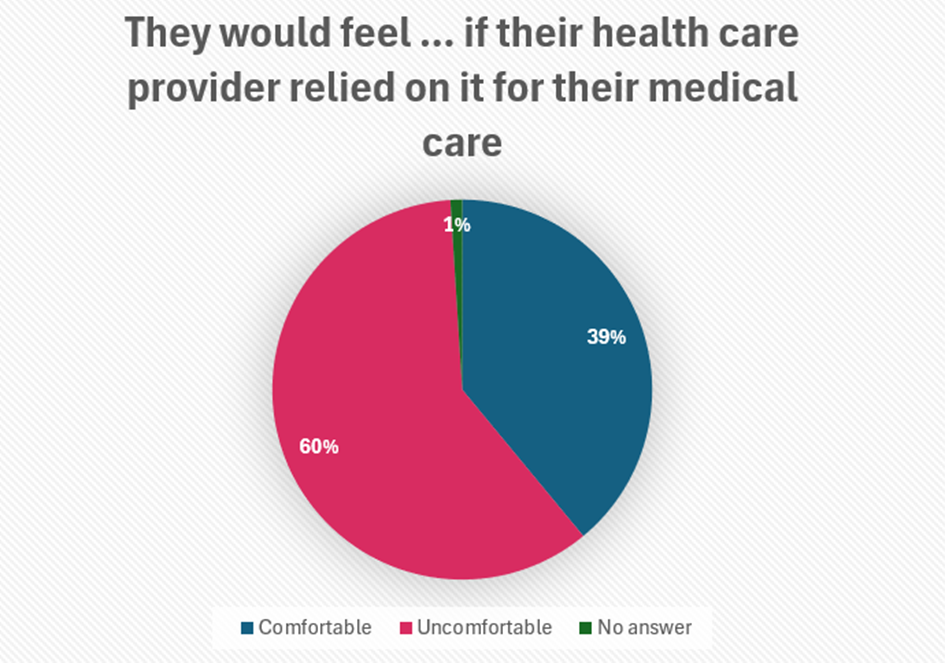

A survey conducted by the Pew Research Center explored the public's views on artificial intelligence in health and medicine, an area where Americans may increasingly encounter technologies that do things like screen for skin cancer or even monitor a patient's vital signs.

The survey found that respondents feel significant discomfort with the idea of AI being used in their own healthcare. Six in ten U.S. adults say they would be uncomfortable if their healthcare provider relied on artificial intelligence to diagnose diseases and recommend treatments. Only 39% said they would be comfortable with this.

Although these results are not especially favorable for artificial intelligence, they do offer some hope. Acceptance is likely to grow year by year: the more accurate the solutions in use, the greater the public's trust is likely to be.

Source: own elaboration based on pewresearch.org [1]

AI for patient comfort: what is the prognosis?

The world of medicine faces many challenges. One of them is staff shortages, which drive up the cost of services. The WHO forecasts a shortage of up to 9.9 million doctors, nurses, and midwives by 2030.

While AI is often presented as a solution to these problems, deploying it widely in hospitals and clinics is proving complicated. The key to introducing artificial intelligence into medical institutions sustainably is to ensure maximum safety at every link in the chain.

If artificial intelligence misinterprets a patient's results, it could pose a real threat to life and make it difficult to determine who is responsible; after all, an algorithm cannot be held accountable for the consequences of a misdiagnosis. Unfortunately, estimates suggest that medical errors in the USA already cause between 250,000 and 440,000 deaths every year. Predictive analytics based on machine learning tools and techniques is another area that still needs significant improvement.

Potential problems in AI development: data

A study from the Massachusetts Institute of Technology (MIT) identifies humans as the main obstacle to developing artificial intelligence. The culprit? The potentially flawed data we provide.

Marzyeh Ghassemi, an MIT assistant professor who has long researched healthcare technology, wrote an article published in the journal Patterns on January 14, 2022. She argues that using AI carefully can make healthcare more efficient and potentially reduce inequalities. However, the study also shows that AI models can produce worse outcomes for underrepresented groups.

One challenge is data imbalance with respect to gender or skin color. If data is collected mainly from men, the algorithm may be less accurate when modelling similar cases in women. Likewise, an algorithm that "learns" from people with fair skin will perform worse when diagnosing people with dark skin. Such biased results pose a very serious threat, as they can lead to misdiagnosis and an inappropriate treatment plan.
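This kind of bias is easy to miss if a model is judged only by its overall accuracy. A minimal sketch, assuming binary labels and a hypothetical group label for each patient (for example, a gender or skin-tone category), is to report accuracy separately for each subgroup. The numbers below are invented purely for illustration.

```python
import numpy as np


def accuracy_by_group(y_true, y_pred, groups):
    """Return overall accuracy and a dict of accuracy per subgroup."""
    y_true, y_pred, groups = map(np.asarray, (y_true, y_pred, groups))
    correct = y_true == y_pred
    overall = float(correct.mean())
    per_group = {str(g): float(correct[groups == g].mean()) for g in np.unique(groups)}
    return overall, per_group


# Illustrative data: 90 patients from group "A" and only 10 from group "B".
groups = np.array(["A"] * 90 + ["B"] * 10)
y_true = np.ones(100, dtype=int)
y_pred = np.ones(100, dtype=int)
y_pred[0:4] = 0    # 4 errors among the 90 group-A patients
y_pred[90:94] = 0  # 4 errors among the 10 group-B patients

overall, per_group = accuracy_by_group(y_true, y_pred, groups)
print(overall)                                             # 0.92
print({g: round(a, 3) for g, a in per_group.items()})      # {'A': 0.956, 'B': 0.6}
```

An overall accuracy of 92% hides the fact that the model is right for only 60% of the smaller group, which is exactly the pattern described above.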

Developing accurate and efficient segmentation algorithms for medical images remains challenging, mainly because few publicly accessible datasets have high-quality annotations. However, strong interest in this field has fueled a growing trend of data sharing, which further accelerates the development of algorithms for medical image analysis. A prime example is the recent "Sparsely Annotated Region and Organ Segmentation (SAROS)" dataset published in Nature. It adds 13 semantic body region labels and 6 body part labels to 900 CT scans from The Cancer Imaging Archive (TCIA) to maximize reusability for other research groups.
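One common form of sparse annotation labels only a subset of the slices in each scan, so evaluation should skip the unlabelled ones rather than treat them as empty. The sketch below assumes that form and computes the Dice coefficient, a widely used overlap metric for segmentation, only over slices flagged as annotated. The function name and the boolean `annotated_slices` vector are illustrative assumptions; the sketch does not reflect the actual file format or annotation scheme of SAROS.

```python
import numpy as np


def sparse_dice(pred: np.ndarray, target: np.ndarray,
                annotated_slices: np.ndarray, eps: float = 1e-8) -> float:
    """Dice coefficient restricted to annotated slices.

    pred, target: boolean volumes of shape (n_slices, height, width).
    annotated_slices: boolean vector of length n_slices. Unannotated slices are
    skipped because their ground truth is unknown, not empty.
    """
    p = pred[annotated_slices]
    t = target[annotated_slices]
    intersection = np.logical_and(p, t).sum()
    # eps keeps the ratio defined (and equal to 1.0) when both masks are empty
    return float((2.0 * intersection + eps) / (p.sum() + t.sum() + eps))


# Toy volume: 10 slices of 4x4 pixels, with a 2x2 structure on every slice.
target = np.zeros((10, 4, 4), dtype=bool)
target[:, 1:3, 1:3] = True
annotated = np.zeros(10, dtype=bool)
annotated[[0, 5]] = True  # assume only slices 0 and 5 were labelled

pred = target.copy()
pred[3] = False          # mistake on an unannotated slice: ignored
pred[0, 1, 1:3] = False  # mistake on an annotated slice: 2 of 4 pixels missed

print(sparse_dice(pred, target, annotated))  # 12/14, about 0.857
```

Masking out unlabelled slices is what makes sparse labels usable: without it, every correct prediction on an unlabelled slice would be counted as a false positive.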

Summary: what's the point?

Finally, it is worth asking: does machine learning allow for early detection of health problems? In our opinion, as experts who create medical algorithms, the answer is definitely yes, despite the challenges involved in training algorithms correctly. Healthcare professionals assure us that we shouldn't be afraid of AI in our daily lives.

All research on developing artificial intelligence has one common denominator: access to large training datasets. AI tools learn by processing and analyzing huge amounts of data. These datasets are created by people, who are fallible and whose judgment can be clouded. A further problem is that many institutions are reluctant to share their data.

To sum up, machine learning techniques are only as effective as the quality, quantity, and representativeness of their training data allow. Falling short on any of these can introduce bias into AI algorithms and, as a result, create a high risk of much lower-quality results. That is why it is so important to ensure that the data used to train an algorithm is appropriate and of high quality.
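Representativeness, at least, can be checked before any training starts. A minimal sketch, assuming each training record carries a group label and that reference population shares are known (both hypothetical here), flags groups that make up much less of the training data than of the population. Flagging a group that falls below half its expected share (`tolerance=0.5`) is an arbitrary illustrative choice.

```python
from collections import Counter


def representation_gaps(train_groups, reference_shares, tolerance=0.5):
    """Flag groups whose share of the training data is below
    `tolerance` times their share in the reference population.

    Returns {group: (training_share, reference_share)} for flagged groups.
    """
    counts = Counter(train_groups)
    total = sum(counts.values())
    flagged = {}
    for group, ref_share in reference_shares.items():
        share = counts.get(group, 0) / total
        if share < tolerance * ref_share:
            flagged[group] = (share, ref_share)
    return flagged


# Hypothetical dataset: 80% of records from men, 20% from women,
# compared with an assumed 50/50 reference population.
train_groups = ["male"] * 80 + ["female"] * 20
reference = {"male": 0.5, "female": 0.5}
print(representation_gaps(train_groups, reference))  # {'female': (0.2, 0.5)}
```

A check like this only covers the attributes you choose to measure; it says nothing about annotation quality, which still has to be verified separately.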