Machine Learning in Endoscopy Image Analysis: Enhancing Diagnostic Accuracy and Efficiency

The integration of machine learning into endoscopic image analysis has substantially improved diagnostic capabilities in gastroenterology. This article examines how machine learning is applied across four tasks in endoscopic imaging: quality assurance, detection, segmentation, and classification.

Quality Assurance in Endoscopic Image Analysis

Quality assurance (QA) is the first step in processing and analyzing endoscopic images. During typical procedures, cameras record at 25 frames per second or more, which amounts to well over a thousand frames per minute. Reviewing that volume of data manually is impractical and prone to errors. Machine learning, particularly deep learning, supports QA by filtering out non-informative, redundant, or blurred images, so that later analysis concentrates on high-quality, relevant frames.
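To make the idea of frame filtering concrete, here is a minimal sketch that scores sharpness with the variance of a Laplacian response. This is a classical heuristic swapped in for illustration, not the deep learning approach discussed in this article. The function names, the toy frames, and the threshold of 100.0 are all assumptions.

```python
from statistics import pvariance

def laplacian_variance(gray):
    """Variance of the 4-neighbour Laplacian over the interior pixels of a 2D grayscale frame."""
    height, width = len(gray), len(gray[0])
    responses = []
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            lap = (gray[y - 1][x] + gray[y + 1][x] + gray[y][x - 1] + gray[y][x + 1]
                   - 4 * gray[y][x])
            responses.append(lap)
    return pvariance(responses)

def keep_sharp_frames(frames, threshold=100.0):
    """Return the indices of frames whose sharpness score exceeds the (assumed) threshold."""
    return [i for i, frame in enumerate(frames) if laplacian_variance(frame) > threshold]

# Toy frames: a high-contrast checkerboard (many edges) and a uniform gray frame (no edges).
sharp = [[255 if (x + y) % 2 == 0 else 0 for x in range(8)] for y in range(8)]
flat = [[128] * 8 for _ in range(8)]
kept = keep_sharp_frames([sharp, flat])
```

Only the checkerboard frame passes the filter here. A learned QA model can additionally reject underexposed or irrelevant frames, which a single sharpness score cannot capture.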

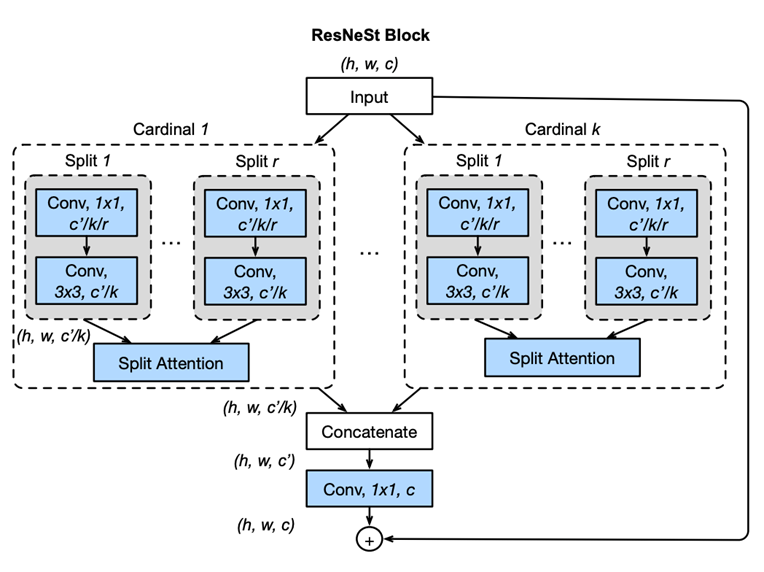

In the study titled “Development and validation of a deep learning algorithm for colonoscopy quality assessment,” Chang et al. (2022) used the ResNeSt network (Fig. 1), a variant of ResNet that incorporates Split-Attention blocks, to automate the classification and selection of high-quality frames from endoscopic video streams. Their model was trained on a diverse set of datasets comprising thousands of video frames, each manually annotated to identify characteristics of low-quality images such as blurriness, underexposure, and irrelevant content. This automation reduces the volume of data that requires manual review and so improves diagnostic efficiency. The study emphasizes the benefits of drawing on varied dataset sources, which strengthen the model’s ability to generalize and adapt to specialized medical imaging tasks (PubMed).

Fig.1 Diagram of ResNeSt block/model proposed in the paper “ResNeSt: Split-Attention Networks” [1]
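The Split-Attention idea can be sketched in a few lines. The version below is a simplified illustration under stated assumptions: it covers a single cardinal group, omits normalization layers, and replaces the block's learned dense layers with a hypothetical `gate` callable. It is not the implementation used by Chang et al. or by the ResNeSt authors.

```python
import math

def split_attention(splits, gate):
    """
    splits: list of R feature-map splits; each split is a list of C channels,
            and each channel is a flat list of pixel activations.
    gate:   hypothetical callable mapping the pooled descriptor (length C) to
            R lists of C logits; it stands in for the learned dense layers.
    Returns the fused output (C channels) and the attention weights (R x C).
    """
    n_splits, n_channels = len(splits), len(splits[0])
    n_pixels = len(splits[0][0])
    # 1. Fuse the splits by element-wise summation.
    fused = [[sum(splits[r][c][i] for r in range(n_splits)) for i in range(n_pixels)]
             for c in range(n_channels)]
    # 2. Global average pooling yields one descriptor value per channel.
    pooled = [sum(channel) / n_pixels for channel in fused]
    # 3. Softmax across splits, computed separately for every channel.
    logits = gate(pooled)
    weights = [[0.0] * n_channels for _ in range(n_splits)]
    for c in range(n_channels):
        peak = max(logits[r][c] for r in range(n_splits))
        exps = [math.exp(logits[r][c] - peak) for r in range(n_splits)]
        total = sum(exps)
        for r in range(n_splits):
            weights[r][c] = exps[r] / total
    # 4. The output is the attention-weighted sum of the splits.
    output = [[sum(weights[r][c] * splits[r][c][i] for r in range(n_splits))
               for i in range(n_pixels)] for c in range(n_channels)]
    return output, weights

def toy_gate(pooled):
    """Hypothetical gate: favours split 0 in proportion to the pooled activation."""
    return [[p for p in pooled], [0.0 for _ in pooled]]

splits = [
    [[2.0, 4.0], [1.0, 1.0]],  # split 0: two channels, two pixels each
    [[0.0, 2.0], [3.0, 3.0]],  # split 1
]
output, weights = split_attention(splits, toy_gate)
```

For each channel the weights sum to one across the splits, so the block decides per channel how much each split contributes to the output.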

Detection in Endoscopic Imaging

Object detection is the task of precisely locating and categorising objects of interest in the input data, most often images. It is commonly accomplished using specialized models such as YOLO [2], M2Det [3], or Faster R-CNN [4]. In endoscopic imaging, the primary focus is identifying changes in tissue or mucosal conditions, which involves applying deep learning models to detect texture anomalies.
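As a small illustration of what "located and categorised" means in practice, the sketch below represents one detection as a label, a confidence score, and a bounding box, and compares the box with an annotated one using intersection over union (IoU), a standard overlap measure. The `Detection` class, the label, and the coordinates are assumptions chosen for the example.

```python
from dataclasses import dataclass

@dataclass
class Detection:
    label: str    # predicted category
    score: float  # model confidence in [0, 1]
    box: tuple    # (x1, y1, x2, y2) corner coordinates in pixels

def iou(a, b):
    """Intersection over union of two (x1, y1, x2, y2) boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0, ix2 - ix1) * max(0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0

predicted = Detection(label="polyp", score=0.91, box=(10, 10, 50, 50))
annotated_box = (30, 30, 70, 70)  # hypothetical expert annotation
overlap = iou(predicted.box, annotated_box)
```

An IoU of 1.0 means the predicted and annotated boxes coincide, while 0.0 means they do not overlap at all.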

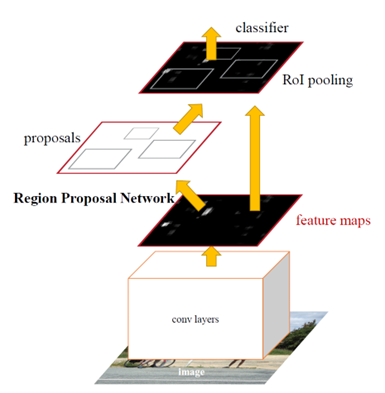

In the paper “Development of a real-time endoscopic image diagnosis support system using deep learning technology in colonoscopy,” Yamada et al. (2019) used a lesion detection model based on Faster R-CNN with a VGG16 backbone. This combined model integrates a classifier for lesion detection and a regression model for accurately positioning lesions. Trained on a robust dataset of endoscopic images featuring various types of polyps, the model learns to identify a wide range of polyp appearances under different lighting and textures. Optimizations in the model architecture allow for real-time processing, suitable for live diagnostic use during endoscopic examinations, significantly enhancing the early detection of lesions [5].
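The regression part of a Faster R-CNN style detector predicts offsets relative to a reference (anchor) box rather than absolute coordinates. The sketch below decodes such offsets using the box parameterization from the Faster R-CNN paper. The anchor and offset values are made up for illustration, and nothing here is taken from the code of Yamada et al.

```python
import math

def decode_box(anchor, deltas):
    """
    anchor: (cx, cy, w, h) reference box given by its centre and size.
    deltas: (tx, ty, tw, th) regression outputs.
    Returns the predicted box as (cx, cy, w, h).
    """
    xa, ya, wa, ha = anchor
    tx, ty, tw, th = deltas
    return (xa + tx * wa,       # shift the centre by a fraction of the anchor width
            ya + ty * ha,       # shift the centre by a fraction of the anchor height
            wa * math.exp(tw),  # scale the width
            ha * math.exp(th))  # scale the height

def to_corners(box):
    """Convert (cx, cy, w, h) to (x1, y1, x2, y2)."""
    cx, cy, w, h = box
    return (cx - w / 2, cy - h / 2, cx + w / 2, cy + h / 2)

anchor = (100.0, 100.0, 40.0, 20.0)
deltas = (0.5, -0.5, math.log(2.0), 0.0)  # hypothetical network output
predicted = decode_box(anchor, deltas)
corners = to_corners(predicted)
```

With these values the centre moves to (120, 90) and the width roughly doubles, while the height stays the same.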

Fig.2 Faster R-CNN model proposed in the paper “Faster R-CNN: Towards Real-Time Object Detection with Region Proposal Networks” [6]

Segmentation in Endoscopic Imaging

Segmentation is the process of partitioning an image into multiple segments in order to simplify its representation or change it into something more meaningful and easier to analyze. In image analysis, the technique is used to locate objects and boundaries by labelling every pixel in the image with the class it represents.
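Per-pixel labelling can be illustrated with a toy example. The sketch below assumes a segmentation model has already produced one score map per class and simply assigns each pixel the class with the highest score. The two classes (0 for background, 1 for polyp) and the score values are assumptions for illustration.

```python
def label_mask(scores):
    """
    scores[k][y][x] is the score of class k at pixel (y, x).
    Returns mask[y][x] holding the index of the best-scoring class.
    """
    n_classes = len(scores)
    height, width = len(scores[0]), len(scores[0][0])
    return [[max(range(n_classes), key=lambda k: scores[k][y][x])
             for x in range(width)]
            for y in range(height)]

# Toy score maps for a 2 x 3 image.
scores = [
    [[0.9, 0.2, 0.1], [0.8, 0.7, 0.3]],  # class 0: background
    [[0.1, 0.8, 0.9], [0.2, 0.3, 0.7]],  # class 1: polyp
]
mask = label_mask(scores)
```

The resulting mask has the same height and width as the image, which is what distinguishes segmentation from box-level detection.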

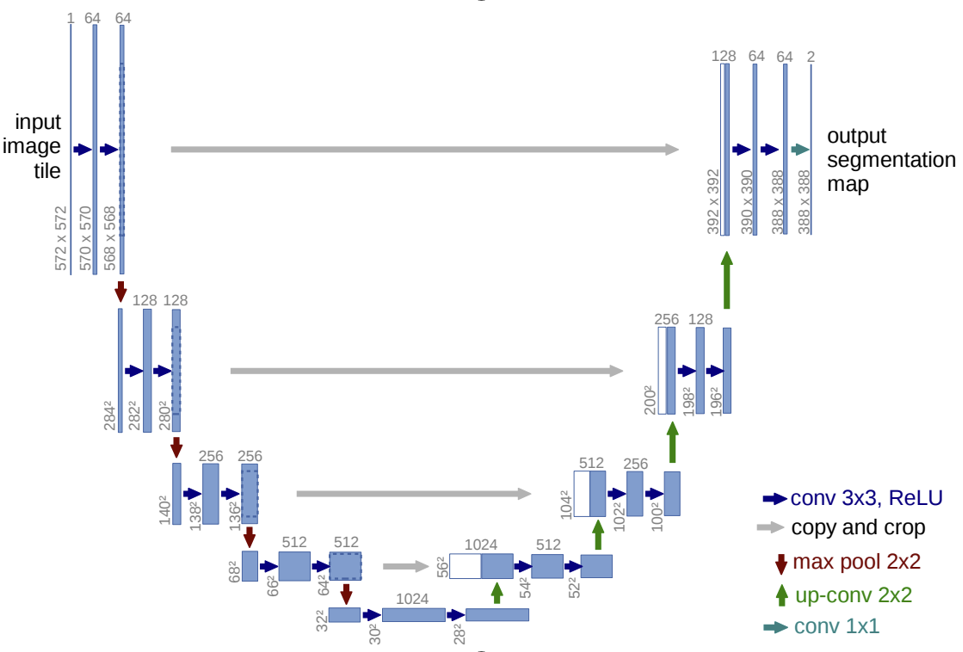

Segmentation in endoscopy goes beyond detection by precisely delineating regions of interest, such as mucosal changes, bleeding areas, or polyps. This task requires architectures designed for dense prediction, such as FastFCN [7] or U-net [8], to outline objects and structures accurately. Yue et al. (2022) advanced this field by employing LFSRNet, an encoder-decoder architecture that includes lesion-aware feature selection and refinement modules. LFSRNet uses a non-local attention mechanism to select important features from the top layers of the encoder and refines them throughout the decoder using guided context information.

Their approach demonstrates superior generalization capabilities across various datasets, thus enhancing its applicability in clinical settings. This precise segmentation allows for better assessment and treatment planning, particularly in quantifying lesion size and morphology (ScienceDirect).
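Once a lesion mask is available, simple measurements follow directly from it. The sketch below counts lesion pixels, finds the bounding extent, and computes the Dice coefficient, a standard overlap score for comparing a predicted mask with a reference mask. The masks are toy data, and converting pixel counts to physical size would require a calibration that is not covered here.

```python
def lesion_area_px(mask, lesion_label=1):
    """Number of pixels labelled as lesion."""
    return sum(row.count(lesion_label) for row in mask)

def bounding_extent(mask, lesion_label=1):
    """Smallest (x_min, y_min, x_max, y_max) containing all lesion pixels, or None."""
    ys = [y for y, row in enumerate(mask) if lesion_label in row]
    xs = [x for row in mask for x, value in enumerate(row) if value == lesion_label]
    if not ys:
        return None
    return (min(xs), min(ys), max(xs), max(ys))

def dice(pred, ref, lesion_label=1):
    """Dice coefficient between two masks of equal shape."""
    pred_count = ref_count = both = 0
    for pred_row, ref_row in zip(pred, ref):
        for p, r in zip(pred_row, ref_row):
            is_pred, is_ref = p == lesion_label, r == lesion_label
            pred_count += int(is_pred)
            ref_count += int(is_ref)
            both += int(is_pred and is_ref)
    total = pred_count + ref_count
    return 2 * both / total if total else 1.0

predicted_mask = [[0, 1, 1], [0, 0, 1]]  # toy model output
reference_mask = [[0, 1, 1], [0, 1, 1]]  # toy expert annotation
area = lesion_area_px(predicted_mask)
extent = bounding_extent(predicted_mask)
score = dice(predicted_mask, reference_mask)
```

Reporting an overlap score such as Dice separately for each dataset is one common way to check how well a segmentation model generalizes.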

Fig.3 U-net architecture by Ronneberger et al., “U-Net: Convolutional Networks for Biomedical Image Segmentation” [9]

Classification in Endoscopic Imaging

Classification is one of the most common tasks in machine learning and comes in both multiclass and binary (0/1) forms. The choice of model typically depends on the type of input data. In endoscopy, classification is usually performed on images, either to classify objects, such as different types of polyps, or to assess disease severity, both of which are crucial for effective patient management.
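The difference between the multiclass and binary settings shows up in the last step of a classifier. The sketch below assumes a model has already produced raw scores (logits) and turns them into a decision with a softmax for the multiclass case and a sigmoid with a threshold for the binary case. The class names and logit values are hypothetical.

```python
import math

def softmax(logits):
    """Convert raw scores into probabilities that sum to one."""
    peak = max(logits)
    exps = [math.exp(v - peak) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]

def classify_multiclass(logits, class_names):
    """Pick the class with the highest softmax probability."""
    probs = softmax(logits)
    best = max(range(len(probs)), key=probs.__getitem__)
    return class_names[best], probs[best]

def classify_binary(logit, threshold=0.5):
    """Return (1 or 0, probability) using a sigmoid and an assumed threshold."""
    prob = 1 / (1 + math.exp(-logit))
    return int(prob >= threshold), prob

polyp_types = ["hyperplastic", "adenoma", "serrated"]  # hypothetical label set
predicted_type, confidence = classify_multiclass([0.2, 2.0, -1.0], polyp_types)
is_positive, probability = classify_binary(-2.0)
```

In a clinical setting the binary threshold would be chosen to balance sensitivity against specificity rather than fixed at 0.5.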

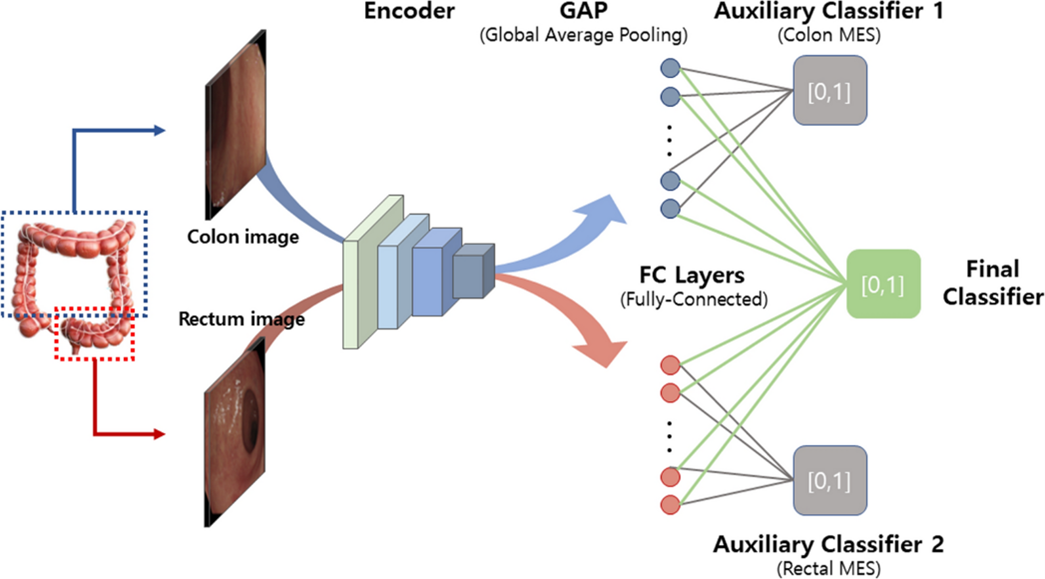

Kim et al. (2023) contributed to this field by developing a deep learning model to classify endoscopic images according to the severity of ulcerative colitis, using the Mayo score as a benchmark. Their model includes a CNN-based encoder and dual auxiliary classifiers for the colon and rectum, which effectively recognize visual patterns associated with various levels of disease severity. Specifically, the model addresses a critical issue in distinguishing between Mayo scores 0/1 and 1/2, which is particularly challenging due to the subtle differences in visual indicators associated with these scores.

This approach provides a non-invasive way to monitor disease progression and aids in personalizing treatment plans based on the predicted severity of the condition, offering significant clinical value in managing ulcerative colitis (Nature).

Fig.4 CNN-based encoder with dual auxiliary classifiers proposed in the paper “Deep learning model for distinguishing Mayo endoscopic subscore 0 and 1 in patients with ulcerative colitis” [10]

Conclusions

In summary, machine learning is transforming endoscopic imaging across a range of diagnostic tasks in gastroenterology. These technologies give clinicians deeper insight into patient conditions, leading to improved diagnostic accuracy and more personalized treatment strategies. They also automate quality assurance, detection, segmentation, and classification. As machine learning models continue to evolve, their integration into clinical practice is poised to become even more common and to shape the future of medical imaging in gastroenterology.

References

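[1] “ResNeSt: Split-Attention Networks”: https://arxiv.org/pdf/2004.08955v2.pdf

[2] “YOLOv4: Optimal Speed and Accuracy of Object Detection”: https://arxiv.org/abs/2004.10934

[3] “M2Det: A Single-Shot Object Detector based on Multi-Level Feature Pyramid Network”: https://arxiv.org/pdf/1811.04533.pdf

[4], [6] “Faster R-CNN: Towards Real-Time Object Detection with Region Proposal Networks” (Fig. 2): https://arxiv.org/pdf/1506.01497.pdf

[5] “Development of a real-time endoscopic image diagnosis support system using deep learning technology in colonoscopy”: https://www.nature.com/articles/s41598-019-50567-5#MOESM1

[7] “FastFCN: Rethinking Dilated Convolution in the Backbone for Semantic Segmentation”: https://arxiv.org/abs/1903.11816

[8], [9] “U-Net: Convolutional Networks for Biomedical Image Segmentation” (Fig. 3): https://arxiv.org/abs/1505.04597

[10] “Deep learning model for distinguishing Mayo endoscopic subscore 0 and 1 in patients with ulcerative colitis”: https://www.nature.com/articles/s41598-023-38206-6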